Kubernetes

Container Orchestration

Kubernetes (often abbreviated as K8s) is an open-source container orchestration platform designed to automate the deployment, scaling, and management of containerized applications.

Originally developed by Google and now maintained by the Cloud Native Computing Foundation (CNCF), Kubernetes has become the de facto standard for container orchestration in production environments.

TDP Kubernetes uses Kubernetes as the foundation for deploying all components of the cloud-native edition of TDP, replacing the bare-metal and virtual machine approach used in the TDP Datacenter edition.

Why Kubernetes?

Adopting Kubernetes as the deployment platform for TDP brings several benefits:

- Automatic scalability: components can scale horizontally according to demand, without manual intervention

- High availability: when a node fails, Kubernetes automatically reschedules the affected components onto healthy nodes

- Declarative deployment: the entire infrastructure is described as code (YAML), enabling versioning and reproducibility

- Resource isolation: each component runs in its own container, with defined CPU and memory limits

- Zero-downtime updates: rolling updates and rollbacks are native to the platform

- Rich ecosystem: integration with tools such as Helm, ArgoCD, Prometheus, and Grafana

Fundamental Concepts

Pods

The Pod is the smallest deployment unit in Kubernetes.

A Pod encapsulates one or more containers that share the same network namespace and storage.

In TDP Kubernetes, each component (such as Kafka, Trino, or Airflow) runs in one or more Pods.

| Element | Description |
|---|---|
| Containers | One or more containers in the same Pod, sharing its resources |
| Network | A single IP per Pod; containers communicate via localhost |
| Storage | Volumes shared between the Pod's containers |
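
As a minimal sketch of the elements above, the following Python snippet builds a Pod manifest in which two containers mount the same volume. The Pod name, container names, images, and mount path are hypothetical and are not taken from the TDP charts; only the manifest fields themselves are standard Kubernetes.

```python
import json


def build_pod_manifest(name: str) -> dict:
    """Return a Pod manifest with two containers sharing one emptyDir volume."""
    # Both containers mount the same volume, so files written by one
    # are visible to the other. They also share the Pod's single IP.
    shared_mount = {"name": "shared-data", "mountPath": "/data"}
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "containers": [
                # Hypothetical images, for illustration only.
                {"name": "app", "image": "example/app:1.0",
                 "volumeMounts": [shared_mount]},
                {"name": "sidecar", "image": "example/sidecar:1.0",
                 "volumeMounts": [shared_mount]},
            ],
            "volumes": [{"name": "shared-data", "emptyDir": {}}],
        },
    }


manifest = build_pod_manifest("example-pod")
print(json.dumps(manifest, indent=2))
```

The printed JSON has the same structure as the equivalent YAML manifest.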

Deployments and StatefulSets

- Deployment: manages stateless Pods, ensuring that the desired number of replicas is always running. Used for components such as Trino, Superset, and CloudBeaver.

- StatefulSet: manages stateful Pods, providing stable network identity and persistent storage. Used for components such as Kafka, PostgreSQL, and ClickHouse.

| Aspect | Deployment | StatefulSet |
|---|---|---|
| State | Stateless (no persistent state) | Stateful (identity and volume per Pod) |
| Pod names | Random | Ordered and stable (name-0, name-1, …) |
| Scalability | Scales replicas interchangeably | Scales in order; each Pod keeps its identity |
| In TDP | Trino, Superset, CloudBeaver, Airflow, etc. | Kafka, PostgreSQL, ClickHouse, NiFi, etc. |
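
The naming and scaling differences can be sketched in a few lines of Python. This is an illustrative model, not Kubernetes code: it assumes the default ordered behaviour of a StatefulSet (Pods created in ascending ordinal order and removed in descending order), and the Deployment suffix only imitates the shape of a generated name.

```python
import secrets
import string


def statefulset_pod_names(name: str, replicas: int) -> list[str]:
    """StatefulSet Pods get stable ordinal names: <name>-0, <name>-1, ..."""
    return [f"{name}-{ordinal}" for ordinal in range(replicas)]


def scale_statefulset(name: str, current: int, target: int) -> list[str]:
    """Return the Pods created (ascending) or removed (descending) when scaling."""
    if target >= current:
        return [f"{name}-{i}" for i in range(current, target)]
    return [f"{name}-{i}" for i in range(current - 1, target - 1, -1)]


def deployment_pod_name(name: str) -> str:
    """Deployment Pods get a generated suffix; this imitates its shape only."""
    alphabet = string.ascii_lowercase + string.digits
    suffix = "".join(secrets.choice(alphabet) for _ in range(5))
    return f"{name}-{suffix}"


print(statefulset_pod_names("kafka", 3))
print(scale_statefulset("kafka", 3, 1))
print(deployment_pod_name("trino"))
```

Because a StatefulSet Pod keeps its name across restarts, its volume and network identity can be tied to that name; a replacement Deployment Pod simply receives a new name.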

Services

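Services expose Pods for internal (ClusterIP) or external (NodePort, LoadBalancer) communication. Ingress and Gateway API are not Service types; they are separate resources that route external HTTP/HTTPS traffic to Services. In TDP Kubernetes, each component has its own Service for discovery and communication between components.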

| Type | Use |
|---|---|
| ClusterIP | Access only within the cluster (default); used for communication between components (e.g. Trino → Hive Metastore). |
| NodePort | Exposes the application on a port on each node; useful for testing or clusters without LoadBalancer. |
| LoadBalancer | Exposes via the cloud provider's load balancer (or MetalLB on-premise). |
| Ingress / Gateway API | Separate resources (not Service types) that route HTTP/HTTPS by host and path to Services; used for UIs (Airflow, Superset, ArgoCD, etc.). |
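
For communication inside the cluster, a Service is normally reached through its DNS name, which follows the pattern `<service>.<namespace>.svc.<cluster-domain>`. The sketch below builds that name in Python. It assumes the common default cluster domain `cluster.local`; the Service name `hive-metastore` is hypothetical and may differ from the name created by the TDP charts.

```python
def service_dns_name(service: str, namespace: str,
                     cluster_domain: str = "cluster.local") -> str:
    """Build the in-cluster DNS name of a Service."""
    return f"{service}.{namespace}.svc.{cluster_domain}"


def service_url(scheme: str, service: str, namespace: str, port: int) -> str:
    """Build a connection URL for a Service, as a client in another Pod would use it."""
    return f"{scheme}://{service_dns_name(service, namespace)}:{port}"


# Hypothetical Service name; 9083 is the usual Hive Metastore Thrift port.
print(service_url("thrift", "hive-metastore", "tdp-project", 9083))
```

Pods in the same namespace can also use the short name (here, `hive-metastore`) on its own.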

Ingress vs Gateway API

TDP Kubernetes supports two approaches for HTTP/HTTPS exposure: Ingress and Gateway API.

The Ingress API remains supported in Kubernetes but is frozen, with no planned functional evolution; new routing features are developed in Gateway API. Ingress NGINX (the community ingress-nginx controller), one of the best-known implementations for Ingress resources, is being retired, with maintenance announced to end in March 2026.

TDP Kubernetes documents both approaches, as different customer environments may use either one.
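
Both approaches route requests by host and path to a backend Service. The following Python sketch models that idea in a simplified form: it matches prefixes on whole path segments and prefers the longest match. It is not the algorithm of any particular controller, and the hostnames and Service names are hypothetical.

```python
from typing import NamedTuple, Optional


class Route(NamedTuple):
    host: str
    path_prefix: str
    service: str


def _segments(path: str) -> list[str]:
    """Split a path into its non-empty segments."""
    return [part for part in path.split("/") if part]


def _prefix_matches(prefix: str, path: str) -> bool:
    """Match on whole segments, so /app matches /app/x but not /apple."""
    prefix_parts = _segments(prefix)
    return _segments(path)[: len(prefix_parts)] == prefix_parts


def resolve(routes: list[Route], host: str, path: str) -> Optional[str]:
    """Return the backend Service for a request, preferring the longest prefix."""
    candidates = [
        route for route in routes
        if route.host == host and _prefix_matches(route.path_prefix, path)
    ]
    if not candidates:
        return None
    best = max(candidates, key=lambda route: len(_segments(route.path_prefix)))
    return best.service


routes = [
    Route("tdp.example.com", "/", "portal"),
    Route("tdp.example.com", "/airflow", "airflow-web"),
    Route("superset.example.com", "/", "superset"),
]
print(resolve(routes, "tdp.example.com", "/airflow/home"))
```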

Namespaces

Namespaces provide logical isolation within the cluster. TDP Kubernetes uses a single namespace to group components; in the documentation this namespace is indicated as <namespace>.

The <namespace> is defined by the user. It may be, for example, tdp-ingestao, tdp-financeiro, departamento-pessoal, or any other name. The -project suffix in examples such as tdp-project is only illustrative in this documentation; the user chooses the name they want.
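
Whatever name is chosen, Kubernetes requires a namespace name to be a valid DNS label: at most 63 characters, only lowercase letters, digits, and hyphens, and starting and ending with a letter or digit. The Python sketch below checks a candidate name against that rule; the function name is illustrative.

```python
import re

# RFC 1123 DNS label: lowercase alphanumerics and '-', alphanumeric at both ends.
_DNS_LABEL = re.compile(r"[a-z0-9]([-a-z0-9]*[a-z0-9])?")


def is_valid_namespace(name: str) -> bool:
    """Return True if the name is acceptable as a Kubernetes namespace name."""
    return len(name) <= 63 and _DNS_LABEL.fullmatch(name) is not None


for candidate in ["tdp-ingestao", "departamento-pessoal", "TDP_Project", "-tdp"]:
    print(candidate, is_valid_namespace(candidate))
```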

Persistent Volumes

Persistent Volumes (PV) and Persistent Volume Claims (PVC) provide persistent storage for components that need to retain data across restarts, such as databases (PostgreSQL, ClickHouse) and messaging systems (Kafka).

The PVC is the storage request made by the Pod (for example, "I need 10 Gi in ReadWriteOnce"); the cluster fulfils this request by binding the PVC to an existing PV or by dynamically provisioning a new volume through a StorageClass (for example, local-path or cloud provisioners).

The figure below illustrates this flow: the Pod references a PVC; the PVC is fulfilled by the StorageClass, which provisions the volume on the node's physical disk (or on external storage, depending on the configured StorageClass).
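
The binding flow can also be sketched as a small Python model. It is a deliberate simplification of what the cluster does: it matches a claim to the smallest available volume that satisfies it and otherwise provisions a new one if the StorageClass supports dynamic provisioning. All class names, volume names, and the `pvc-<claim>` naming are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PersistentVolume:
    name: str
    capacity_gi: int
    access_modes: frozenset
    storage_class: str
    claimed_by: Optional[str] = None


@dataclass
class Claim:
    name: str
    request_gi: int
    access_mode: str
    storage_class: str


def bind(claim: Claim, volumes: list, dynamic_classes: set) -> Optional[PersistentVolume]:
    """Bind a claim to an existing volume, provision one, or leave it pending (None)."""
    matching = [
        pv for pv in volumes
        if pv.claimed_by is None
        and pv.storage_class == claim.storage_class
        and claim.access_mode in pv.access_modes
        and pv.capacity_gi >= claim.request_gi
    ]
    if matching:
        # Prefer the smallest volume that still satisfies the request.
        chosen = min(matching, key=lambda pv: pv.capacity_gi)
    elif claim.storage_class in dynamic_classes:
        # Dynamic provisioning: create a volume sized to the request.
        chosen = PersistentVolume(f"pvc-{claim.name}", claim.request_gi,
                                  frozenset({claim.access_mode}), claim.storage_class)
        volumes.append(chosen)
    else:
        return None
    chosen.claimed_by = claim.name
    return chosen


volumes = [PersistentVolume("pv-a", 50, frozenset({"ReadWriteOnce"}), "local-path")]
bound = bind(Claim("data-kafka-0", 10, "ReadWriteOnce", "local-path"), volumes, {"local-path"})
print(bound.name, bound.claimed_by)
```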

ConfigMaps and Secrets

- ConfigMaps: store non-sensitive configuration in key-value format, used to parameterize components

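- Secrets: store sensitive data such as passwords, tokens, and certificates. By default the values are base64-encoded, not encrypted; protecting them requires enabling encryption at rest in the cluster and restricting access with RBAC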

| Resource | Typical content | Use in TDP |
|---|---|---|
| ConfigMap | URLs, endpoints, flags, configuration files | Connection parameters (e.g. Trino catalogs), non-sensitive configs |
| Secret | Passwords, API keys, TLS certificates | Database credentials, OCI registry, LDAP, tokens |
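
The practical difference between the two resources shows up in their manifests: a ConfigMap stores values as plain text under `data`, while a Secret stores them base64-encoded. Base64 is an encoding, not encryption, so anyone who can read the Secret can decode it. The Python sketch below illustrates this; the resource names, keys, and values are hypothetical.

```python
import base64


def build_config_map(name: str, values: dict[str, str]) -> dict:
    """Build a ConfigMap manifest; values are stored as plain text."""
    return {"apiVersion": "v1", "kind": "ConfigMap",
            "metadata": {"name": name}, "data": dict(values)}


def build_secret(name: str, values: dict[str, str]) -> dict:
    """Build an Opaque Secret manifest; values under `data` are base64-encoded."""
    encoded = {
        key: base64.b64encode(value.encode("utf-8")).decode("ascii")
        for key, value in values.items()
    }
    return {"apiVersion": "v1", "kind": "Secret", "type": "Opaque",
            "metadata": {"name": name}, "data": encoded}


secret = build_secret("postgres-credentials", {"password": "s3cr3t"})
encoded_password = secret["data"]["password"]
# Decoding requires no key, which is why access to Secrets must be restricted.
print(encoded_password, base64.b64decode(encoded_password).decode("utf-8"))
```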

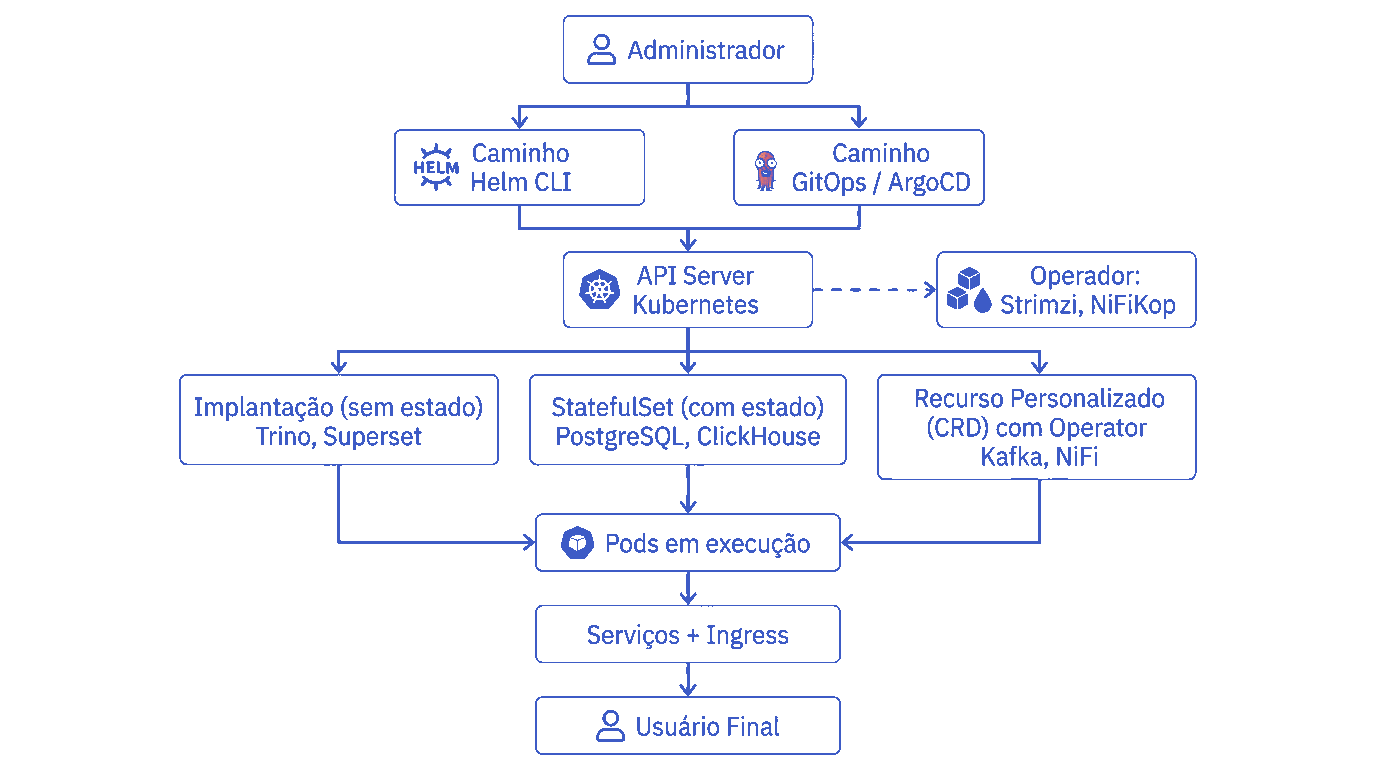

TDP Kubernetes Deployment Model

Helm Charts

Helm is the Kubernetes package manager, and TDP Kubernetes uses Helm Charts to package, configure, and install each platform component.

Each chart contains:

- Kubernetes resource templates (Deployments, Services, ConfigMaps, etc.)

- Configurable default values (values.yaml)

- Dependency declarations between charts

- Metadata and documentation
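
When a chart is installed, Helm combines the chart's default values with the values supplied by the user: nested maps are merged key by key, while scalars and lists are replaced. The Python sketch below models that behaviour in a simplified form (it omits Helm's special handling of null values). The keys shown are hypothetical and not taken from a TDP chart.

```python
import copy


def merge_values(defaults: dict, overrides: dict) -> dict:
    """Deep-merge overrides onto defaults: maps merge by key, the rest is replaced."""
    merged = copy.deepcopy(defaults)
    for key, value in overrides.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = merge_values(merged[key], value)
        else:
            merged[key] = copy.deepcopy(value)
    return merged


chart_defaults = {
    "replicaCount": 1,
    "resources": {"limits": {"cpu": "1", "memory": "2Gi"}},
}
user_values = {
    "replicaCount": 3,
    "resources": {"limits": {"memory": "4Gi"}},
}
print(merge_values(chart_defaults, user_values))
```

Only the overridden keys change; the CPU limit keeps the chart default because the user file does not mention it.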

In TDP Kubernetes, Helm is generally adopted for components whose lifecycle can be adequately handled by native Kubernetes resources, with less need for specialized operational automation.

TDP Kubernetes charts are available in the Tecnisys OCI registry (registry.tecnisys.com.br) and follow the naming convention tdp-<component> (for example, tdp-kafka, tdp-trino, tdp-airflow).

OCI Registry

In TDP Kubernetes, artifacts such as Helm Charts and container images can be distributed through an OCI-compatible registry. This model standardizes the storage, versioning, and distribution of packages used by the platform.

Using a centralized registry facilitates:

- controlled publication and distribution of artifacts;

- consistent versioning of charts and images;

- integration with installation and automation flows;

- support for CLI-based and GitOps approaches.

Authentication, registry access, and operational commands are covered in the installation sections.

Operators

Operators extend Kubernetes to automate the management of applications that require more specific operational logic. Beyond installation, they allow continuous monitoring, reconciliation, and administration of the application state.

In TDP Kubernetes, this approach is typically used for components with greater operational complexity, especially when there is a need for more advanced automation around persistence, updates, or failure recovery.
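
At the core of an Operator is a reconciliation loop: it compares the desired state declared in a custom resource with the state observed in the cluster and takes one step at a time to close the gap. The Python sketch below is a toy model of that loop, not the code of any real Operator; the state fields and action names are hypothetical.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class ClusterState:
    replicas: int
    version: str


def reconcile(desired: ClusterState, observed: ClusterState) -> str:
    """Return the single next action that moves observed towards desired."""
    if observed.version != desired.version:
        return "upgrade"
    if observed.replicas < desired.replicas:
        return "scale-up"
    if observed.replicas > desired.replicas:
        return "scale-down"
    return "noop"


def apply_action(action: str, desired: ClusterState, observed: ClusterState) -> ClusterState:
    """Simulate the effect of an action on the observed state."""
    if action == "upgrade":
        return replace(observed, version=desired.version)
    if action == "scale-up":
        return replace(observed, replicas=observed.replicas + 1)
    if action == "scale-down":
        return replace(observed, replicas=observed.replicas - 1)
    return observed


def run_until_converged(desired: ClusterState, observed: ClusterState,
                        max_steps: int = 20) -> list[str]:
    """Repeat reconcile/apply until nothing is left to do; return the actions taken."""
    actions = []
    for _ in range(max_steps):
        action = reconcile(desired, observed)
        if action == "noop":
            break
        observed = apply_action(action, desired, observed)
        actions.append(action)
    return actions


print(run_until_converged(ClusterState(3, "2.0"), ClusterState(1, "1.0")))
```

A real Operator runs this comparison continuously, so manual changes or failures are corrected the next time the loop runs.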

Deployment Strategy: Helm and Operators

The TDP Kubernetes deployment strategy is defined according to the operational complexity of each service. To this end, the platform may adopt different approaches with Helm and Operators, depending on the profile of the deployed component.

In general:

- simpler, ephemeral, or predominantly stateless services tend to be deployed with Helm;

- services with greater operational complexity, data persistence, or advanced automation requirements may be deployed with Operators.

ArgoCD and GitOps

GitOps

GitOps is an operational model in which the desired state of the environment is defined declaratively in Git repositories. Changes become versioned, auditable, and continuously reconciled in the cluster.

This approach favours greater operational predictability, change traceability, and reduction of direct manual changes to the environment.

GitOps Principles

The core principles of GitOps include:

- Single source of truth: the desired state of the platform is maintained in Git repositories;

- Declarative configuration: resources are defined by versionable manifests and files;

- Versioning and traceability: every change is recorded and auditable;

- Automatic reconciliation: the environment is continuously compared with the desired state and adjusted when necessary;

- Predictable operation: deployments, changes, and rollbacks follow a controlled and reproducible flow.
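
Automatic reconciliation can be illustrated with a small Python sketch that compares the manifests held in Git with those live in the cluster and lists what a synchronization would have to do. It is a simplified model under stated assumptions, not the algorithm of any GitOps tool: resources are keyed by a `(kind, name)` tuple and compared as plain dictionaries, and the resource names are hypothetical.

```python
def plan_sync(desired: dict, live: dict, prune: bool = True) -> dict:
    """Compare manifests keyed by (kind, name) and list what a sync would do."""
    create = sorted(key for key in desired if key not in live)
    update = sorted(key for key in desired if key in live and desired[key] != live[key])
    # Resources that exist in the cluster but not in Git are removed only when pruning.
    delete = sorted(key for key in live if key not in desired) if prune else []
    return {"create": create, "update": update, "delete": delete}


def in_sync(desired: dict, live: dict) -> bool:
    """Return True when the cluster already matches the desired state."""
    return not any(plan_sync(desired, live).values())


git_state = {
    ("Deployment", "trino"): {"replicas": 3},
    ("Service", "trino"): {"port": 8080},
}
cluster_state = {
    ("Deployment", "trino"): {"replicas": 1},  # drifted by a manual change
    ("ConfigMap", "old-config"): {},           # no longer declared in Git
}
print(plan_sync(git_state, cluster_state))
```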

ArgoCD

ArgoCD is a declarative delivery tool based on GitOps principles. In this model, the desired application state is described in Git repositories, and the environment is continuously reconciled with that state.

In TDP Kubernetes, this approach enables:

- change traceability;

- greater deployment predictability;

- declarative control of environments;

- reduction of direct manual changes to the cluster.

TDP Kubernetes Architecture

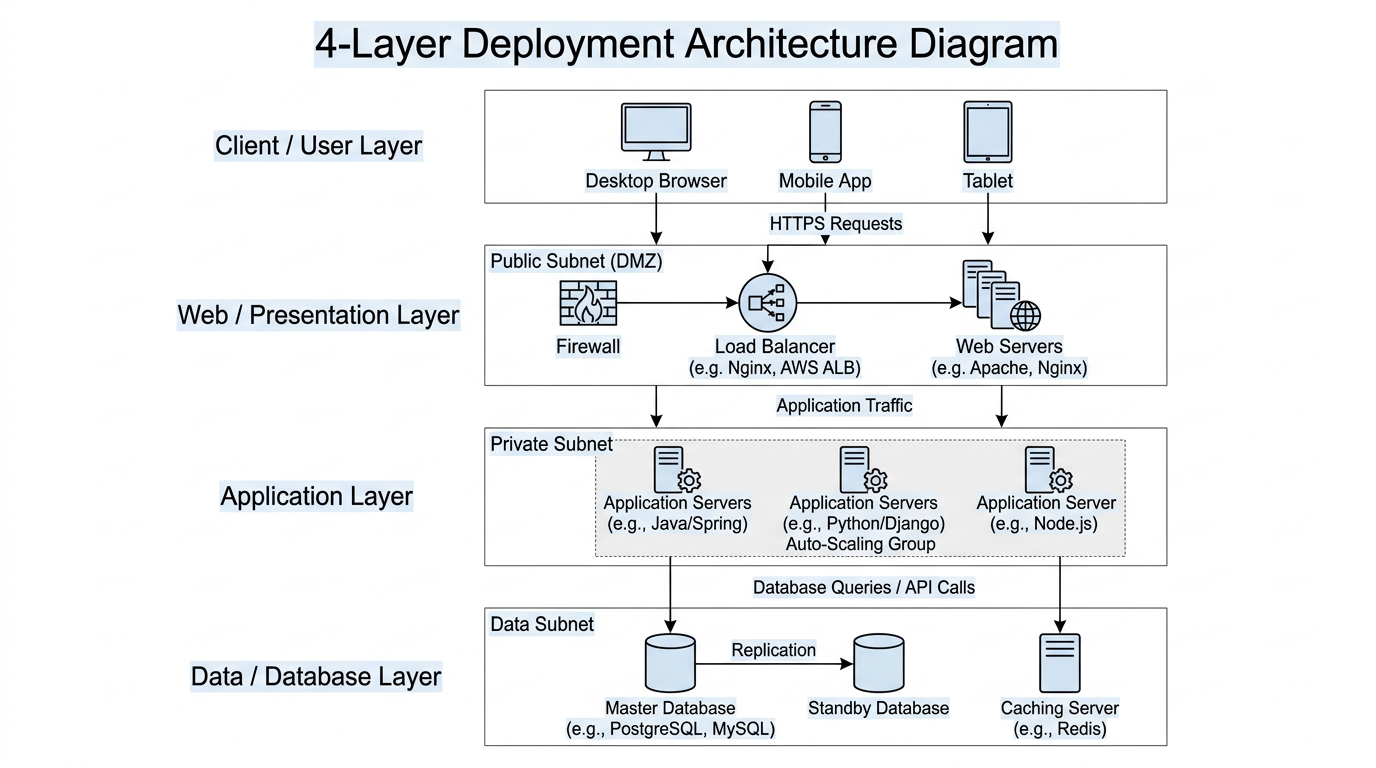

The TDP Kubernetes architecture can be viewed from two complementary perspectives.

The first presents an overview of the solution deployment, including the origin of artifacts, the Kubernetes cluster, exposure mechanisms, and integration with storage.

The second details the functional organization of the platform components, grouping services by logical responsibility layers.

The table below lists these layers, the components in each, and the function each layer performs within the platform.

| Layer | Components | Function |
|---|---|---|
| Platform Infrastructure | ArgoCD, PostgreSQL | Platform operation support, management, and shared services |
| Messaging | Apache Kafka | Event streaming and data integration |
| Metadata and Table Storage | Hive Metastore, Delta Lake, Apache Iceberg | Metadata and open table formats |
| Object Storage | Apache Ozone | Distributed object storage, S3-compatible alternative for on-premise environments |
| Security | Apache Ranger | Access control and security policies |
| Ingestion | Apache NiFi | Data ingestion and transformation |
| Processing | Apache Spark, Trino | Batch processing, streaming, and SQL queries |
| Orchestration and Development | Apache Airflow, JupyterHub | Pipeline orchestration and notebooks |
| Analytics and Access | ClickHouse, Apache Superset, CloudBeaver | OLAP, visualization, and data administration |
| Governance | OpenMetadata | Data catalog and lineage |

Platform Operation

The TDP Kubernetes platform can be understood in a flow from the definition of the desired state to the execution of components and the final consumption of deployed services.

1. Control and Deployment

Deployment and environment management can take place through different approaches, depending on the operational model adopted:

- TDPCTL (recommended): automation tool used to reduce manual configuration and deployment steps.

- ArgoCD with Git: GitOps flow in which the desired state of the environment is maintained in a Git repository and continuously reconciled in the cluster.

- Deploy from versioned artifacts: components can also be deployed from charts and images made available in an OCI-compatible registry.

The operational details of these approaches are described in the installation and configuration sections.

2. Artifact Distribution

Container images and Helm Charts for the platform are distributed through an OCI-compatible registry.

This model enables:

- centralized versioning of artifacts;

- standardized distribution of images and charts;

- integration with automated installation and GitOps flows;

- access control according to the environment.

The registry address, authentication, SSO, and access procedures are covered in the prerequisites and installation pages.

3. Component Execution

After deployment, the Kubernetes cluster runs the platform components as containerized workloads, using native scheduling, network, scalability, and persistence mechanisms.

In this model:

- stateless components tend to be managed by resources such as Deployments;

- stateful components tend to use StatefulSets and persistent volumes;

- communication between components takes place through Services;

- exposure of web interfaces can be done through mechanisms such as Gateway API or other implementations supported by the environment.

4. Data Persistence and Access

The platform's data layer may use external storage services or resources provided by the solution itself, depending on the environment architecture.

In general:

- S3-compatible services may be used when they already exist in the infrastructure;

- in on-premise scenarios, or where no compatible external storage is available, Apache Ozone can act as the object storage layer;

- components that require persistence use Persistent Volumes and Persistent Volume Claims, according to the StorageClass available in the cluster.

This model preserves deployment flexibility and compatibility between the components of the stack.

Next Steps

To continue with the adoption or operation of TDP Kubernetes, see also: